NOTE: Chris Hill wrote a very detailed post on Reddit with more information on ReFS with integrity streams, and also created a pretty impressive automated PowerShell script. His results are very concerning, and I’d advise against trusting ReFS until further notice. I will redo my testing and update the post once we get to the bottom of this. See his comment below, or the Reddit thread in question: https://www.reddit.com/r/DataHoarder/comments/scdclm/testing_refs_data_integrity_streams_corrupt_data/

Rationale

The year is 2022, and if you are serious about storing your data, you likely have an impressive NAS setup with ZFS, ECC RAM, etc. I have gone down the ZFS rabbit hole, but I’m still running a gigabit network, and adding a NAS or server to my setup would require upgrading to at least 2.5GbE; otherwise, the network would be a serious performance bottleneck.

In the past, I explored using ReFS with integrity streams as a ZFS-like alternative, but there are some horror stories out there (check here and here for examples), so I dropped the idea.

With the release of Windows 11, I’m planning to upgrade my desktop so I can run nested virtualization on my AMD Ryzen, so I decided to revisit this topic. Can ReFS be trusted with the latest Windows release? Let’s find out!

Setup

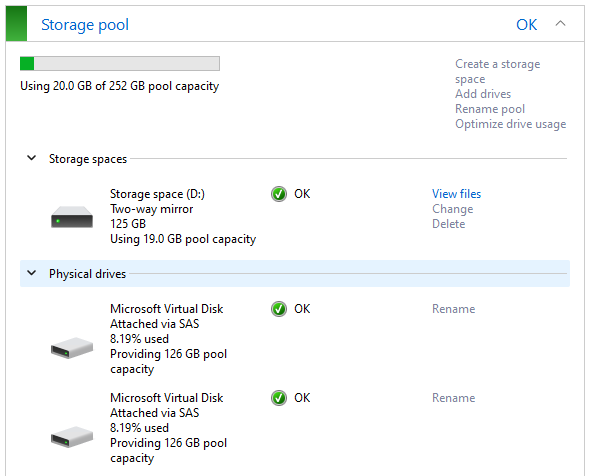

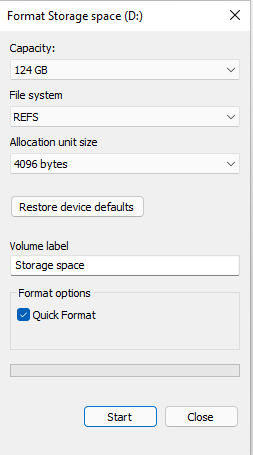

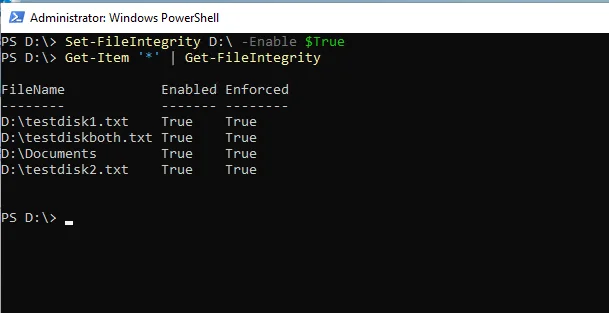

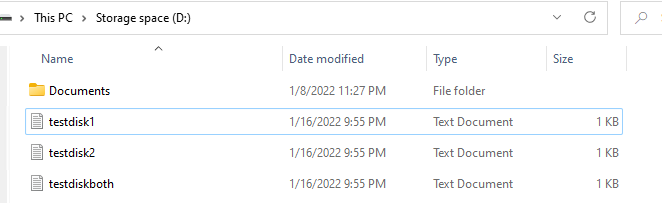

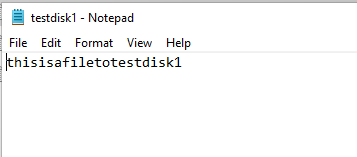

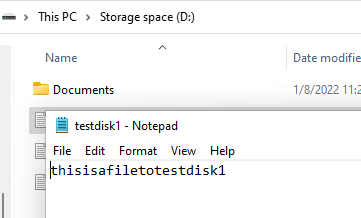

For the purpose of this test, I will keep things simple. I’m going to run a Windows 11 Pro for Workstations VM with 2 additional disks attached. These disks will be set up as a two-way mirror in Storage Spaces, with ReFS and integrity streams enabled. To simulate corruption, I will create 3 text files and do the following:

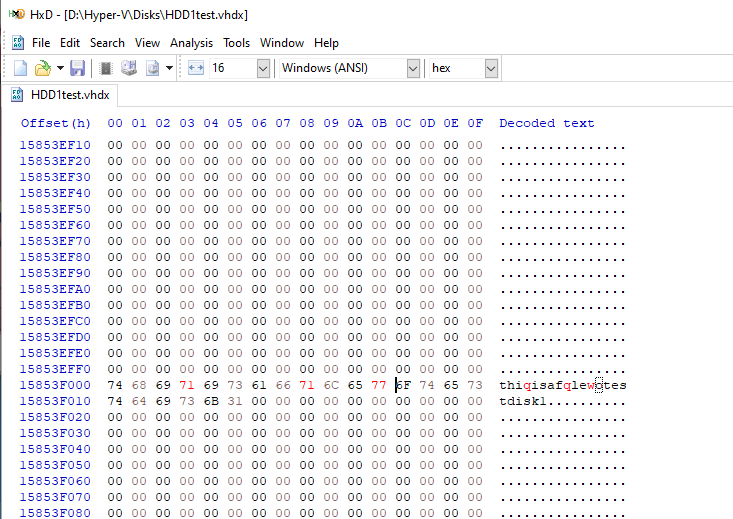

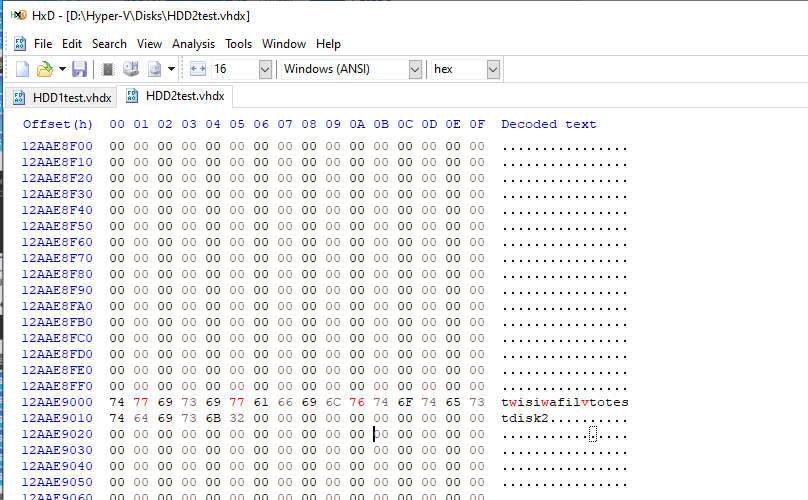

- Scenario A: Flip some bits in the first file on disk 1

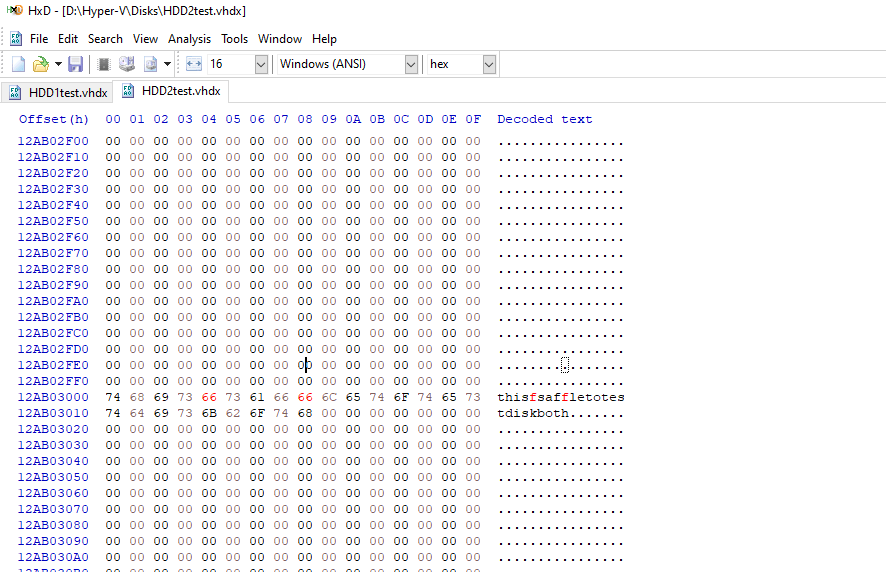

- Scenario B: Flip some bits in the second file on disk 2

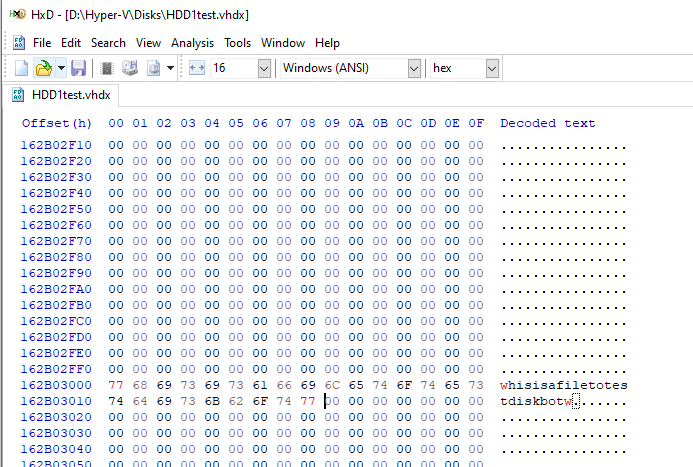

- Scenario C: Flip some bits in the third file on both disks
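If you’d rather script the bit flipping than use a hex editor, here’s a minimal Python sketch of the idea. It assumes the VM is shut down and that each test file contains a unique text marker stored contiguously in the raw disk image; find_offsets and flip_bits are hypothetical helpers, not part of any tool used in this post.

```python
import mmap
from pathlib import Path


def find_offsets(image: Path, needle: bytes) -> list[int]:
    """Return every byte offset where `needle` appears in the raw disk image.

    Assumption: the marker text is stored uncompressed and contiguously.
    """
    offsets = []
    with image.open("rb") as f, mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as m:
        pos = m.find(needle)
        while pos != -1:
            offsets.append(pos)
            pos = m.find(needle, pos + 1)
    return offsets


def flip_bits(image: Path, offset: int, mask: int = 0x01) -> None:
    """XOR the byte at `offset` with `mask`, flipping the selected bits in place."""
    with image.open("r+b") as f:
        f.seek(offset)
        original = f.read(1)[0]
        f.seek(offset)
        f.write(bytes([original ^ mask]))
```

For Scenario A you would run it against disk 1’s image only, for Scenario B against disk 2’s, and for Scenario C against both, since each mirror member holds its own copy of the file’s bytes.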

Based on Microsoft’s documentation (you can read it here), the expectation is that ReFS will be able to correct Scenarios A and B, since a healthy copy still exists on the other disk, but will fail on Scenario C, where neither copy is intact. I will use the default cluster size of 4K, as recommended here.
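To make the expected outcomes concrete, here’s a toy Python model of one block on a two-way mirror with a stored checksum. It only illustrates the logic described in the documentation and is not how ReFS is implemented: it uses zlib’s CRC32 for simplicity and ignores ReFS’s actual checksum algorithm and on-disk layout. MirroredBlock is a hypothetical name.

```python
import zlib
from dataclasses import dataclass, field


@dataclass
class MirroredBlock:
    """Toy model: one logical block stored on two disks plus its checksum."""
    copies: list[bytearray]  # copies[0] = disk 1, copies[1] = disk 2
    checksum: int = field(init=False)

    def __post_init__(self) -> None:
        # The checksum is recorded at write time, while both copies are healthy.
        self.checksum = zlib.crc32(self.copies[0])

    def read(self) -> bytes:
        """Return verified data, repairing bad copies from a good one if possible."""
        good = [c for c in self.copies if zlib.crc32(c) == self.checksum]
        if not good:
            # Scenario C: no copy matches the checksum, so nothing can be repaired.
            raise OSError("checksum mismatch on all copies")
        healthy = bytes(good[0])
        for i, c in enumerate(self.copies):
            if zlib.crc32(c) != self.checksum:
                # Scenarios A/B: overwrite the corrupted copy with the healthy one.
                self.copies[i] = bytearray(healthy)
        return healthy
```

Scenarios A and B succeed because one copy still matches the checksum and is used to rewrite the other; Scenario C raises an error because neither copy does.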

Results

In the first test, I open file 1, and it opens fine, with no signs of corruption.

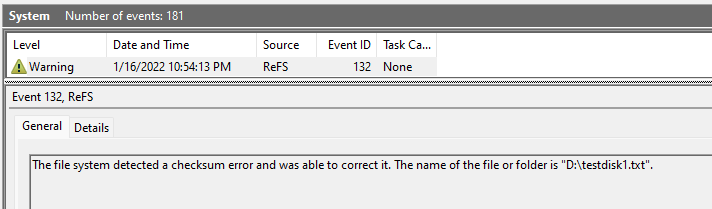

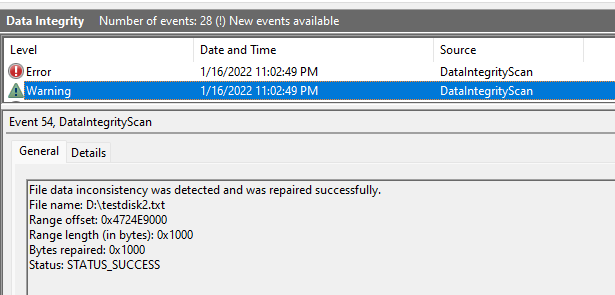

Checking Event Viewer, we can see that ReFS was able to automatically fix the file for us. Yay!

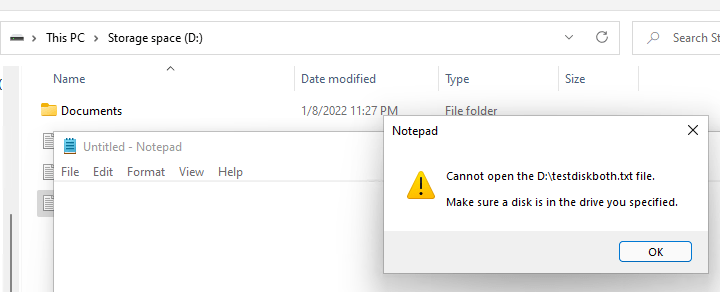

Now, if we try to open the file we broke on both disks, here is what we get.

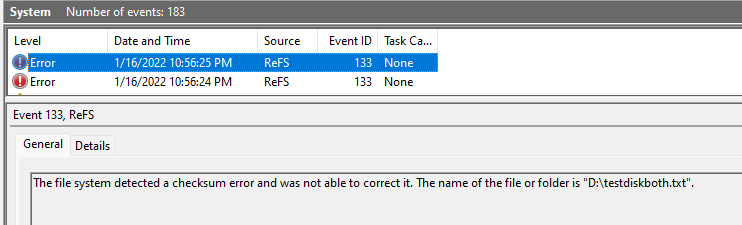

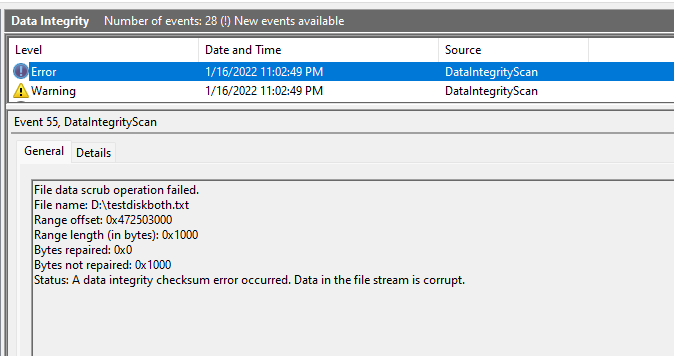

Here are the two corresponding events (I’m assuming one for each disk that failed the checksum).

Note that ReFS did not delete the file, unlike what other people using parity disks experienced (mentioned earlier in this post). I’m not sure if this is new behavior or just luck.

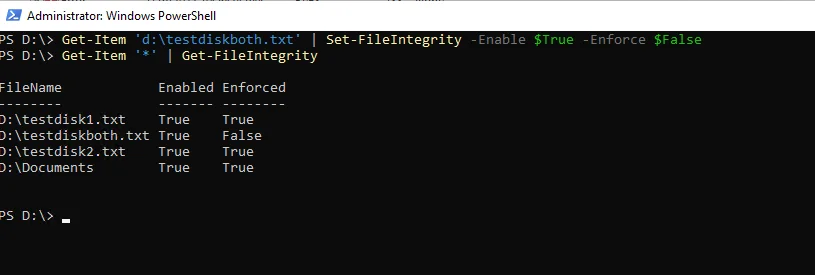

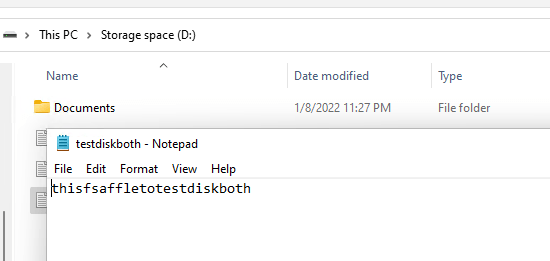

Interestingly, since the file was not removed, I can unblock it and still access it by setting its enforce flag to False (you might not want to lose all of its content, after all).

As you can see above, the file loads but the corrupted bits remain 🙁
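The enforce flag only changes what happens when no healthy copy is left. Here’s a hypothetical Python model of that choice; read_verified is not a real API, and CRC32 stands in for ReFS’s checksum. On Windows, the flag itself is set per file with the Set-FileIntegrity cmdlet’s -Enforce parameter.

```python
import zlib


def read_verified(copies: list[bytes], checksum: int, enforce: bool = True) -> bytes:
    """Toy model of reading a mirrored file that has integrity streams enabled."""
    for copy in copies:
        if zlib.crc32(copy) == checksum:
            return copy  # the first copy that passes the checksum is returned
    if enforce:
        # Enforcement on: the read is blocked, as with the Scenario C file.
        raise OSError("integrity check failed; access blocked")
    # Enforcement off: return the (still corrupted) data instead of failing.
    return copies[0]
```

This matches what we saw: with enforcement off, the file opens, but the corrupted bits are still there.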

Bonus Content: Enabling Data Scrubbing

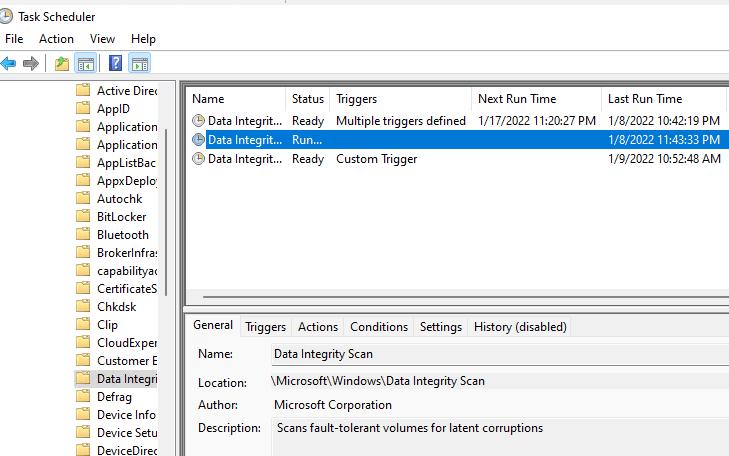

ZFS has a scrubber to detect and repair a phenomenon called bit rot. Interestingly enough, ReFS has an equivalent Data Integrity verification task, but it comes disabled by default. Go to Task Scheduler -> Microsoft -> Windows -> Data Integrity, and pick the 2nd task in the list. Set a weekly schedule and you should be good to go.

Just to see if it works, I ran it manually, and sure enough, it found the inconsistency in file 2 on disk 2 (which I had not opened yet, so ReFS had not repaired it), as well as the file I corrupted on both disks.

Pretty cool, huh?
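Conceptually, a scrub differs from the on-read repair shown in the results in that it proactively walks every file, including ones nobody has opened. Here’s a toy Python sketch of such a pass under the same simplifying assumptions (CRC32 checksums, two in-memory copies per file, and a hypothetical scrub function); it is not the actual logic of the Data Integrity task.

```python
import zlib


def scrub(files: dict[str, list[bytearray]], checksums: dict[str, int]) -> dict[str, str]:
    """Verify every copy of every file; repair from a healthy copy when one exists."""
    report = {}
    for name, copies in files.items():
        expected = checksums[name]
        bad = [i for i, c in enumerate(copies) if zlib.crc32(c) != expected]
        if not bad:
            report[name] = "ok"
        elif len(bad) == len(copies):
            report[name] = "unrepairable"  # every copy is corrupt (Scenario C)
        else:
            healthy = next(c for c in copies if zlib.crc32(c) == expected)
            for i in bad:
                copies[i][:] = healthy  # overwrite the bad copy in place
            report[name] = "repaired"
    return report
```

With file 2 corrupted on disk 2 and file 3 corrupted on both disks, this sketch reports file 2 as repaired and file 3 as unrepairable, mirroring what the scheduled task found.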

Long Term Testing

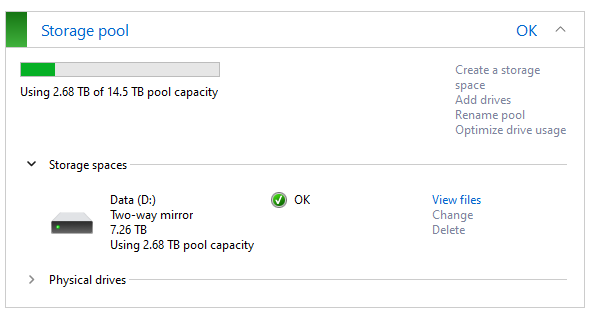

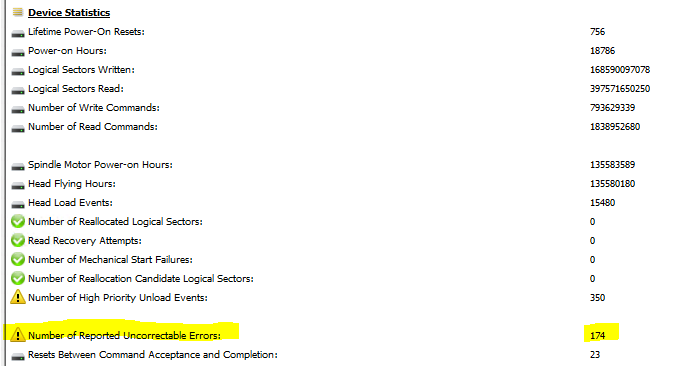

As much as synthetic tests can provide some insight into how the underlying technology operates, I want to put ReFS to the test with some real data. I have 2 Seagate SMR disks (if you don’t know about SMR, these drives are not great for sustained write workloads), and one of them is already failing (174 uncorrected errors reported by S.M.A.R.T.). I will run a few Hyper-V workloads on these disks, with integrity streams also enabled. Stay tuned!

Final Thoughts

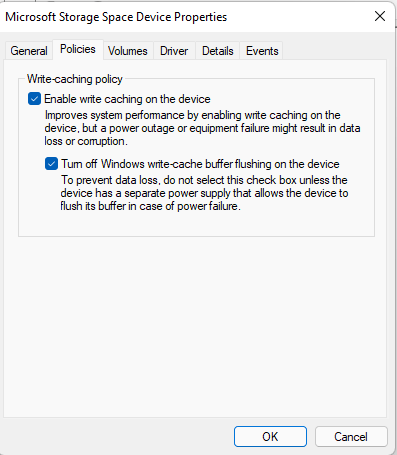

Although this test is far from proof that ReFS can be trusted with your data, it does indicate that the filesystem performs automatic error correction when you enable integrity streams on a mirrored Storage Spaces volume. Another test worth running is against a parity volume, but write performance on Storage Spaces parity volumes tends to be horrendous; if you decide to try it, feel free to comment and post some results!

Ultimately, a decent backup strategy is needed with any file system you choose, but ReFS can help in scenarios where silent data corruption is happening. And of course, if you are truly worried about corruption at all levels, don’t forget to pair this setup with ECC memory.

8 Comments

Thanks for this. I have run some similar tests, automated with PowerShell. However, under extended testing I found that ReFS integrity streams currently don’t always work the way you found they did, and sometimes actually corrupt good data, so be careful about what you store there!

https://www.reddit.com/r/DataHoarder/comments/scdclm/testing_refs_data_integrity_streams_corrupt_data/

Hi Chris,

Thanks so much for bringing this Reddit post to my attention! I had originally posted on the older thread on the topic, but I’ve been away from Reddit for a while. Glad to see you pushed the testing further with a PowerShell script! Looks pretty awesome!

I must admit I have been using the ReFS solution for a while now (since the time of the posting), but I imagine I’ve been lucky so far: I set up a monthly data scrub, and it has reported no errors. Based on your new findings, this is a ticking time bomb, so I will need to look into it more and decide what to do next.

I do have a separate backup solution in case things take a turn for the worse, but I’ll redo my original testing with more attempts. At first glance, the only difference I can see in our testing is that you are mounting the VHDX files inside the VM. In my testing, I kept the VHDX files outside of the VM, shut the whole thing down while flipping bits, and after turning it back on, either accessed the corrupted file or ran a data scrub to trigger detection and repair.

In any event, I will add a warning to the top of the post for now. I’m glad you were able to engage with Microsoft; hopefully they get to the bottom of this and fix it for good!

Cheers!

Just thought I’d share my findings too in case of any use:

1. Slow parity volumes on Storage Spaces can be fixed by aligning the interleave size with the cluster size / allocation unit size, which gives a much more significant improvement than disabling the write-cache buffer (which may or may not make a visible difference, depending on the hardware). Specifically:

– Smallest supported interleave size for multi-column = 16kB

– File system cluster size / allocation unit size to be formatted as:

  – 5 columns single parity = 16kB x (5-1) = 64kB

  – 6 columns dual parity = 16kB x (6-2) = 64kB

  – 3 columns single parity = 16kB x (3-1) = 32kB

Reference: https://social.technet.microsoft.com/Forums/en-US/64aff15f-2e34-40c6-a873-2e0da5a355d2/parity-storage-space-so-slow-that-it39s-unusable
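The pattern in these examples is cluster size = interleave x (columns - parity disks), presumably so that one allocation unit covers exactly the data portion of a full stripe and writes can avoid a parity read-modify-write. A small hypothetical Python helper makes the arithmetic explicit:

```python
def allocation_unit_kb(columns: int, parity_disks: int, interleave_kb: int = 16) -> int:
    """Allocation unit size (KB) that spans the data portion of one full stripe.

    Each stripe holds `columns` interleave-sized slabs, `parity_disks` of which
    hold parity, so the data portion is interleave * (columns - parity_disks).
    """
    data_columns = columns - parity_disks
    if data_columns < 1:
        raise ValueError("need at least one data column")
    return interleave_kb * data_columns
```

For example, allocation_unit_kb(3, 1) gives 32, matching the 3-column single-parity case above.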

2. A Storage Spaces parity ReFS volume connected through a mains-powered 5Gbps USB hub (I know how bad this is, but it’s a great-value portable alternative to hardware RAID) can suffer file system corruption after a blue screen (triggered when the USB hub resets unexpectedly). The corruption can be beyond what ReFSUtil can salvage, even with write caching disabled on the physical disks, an issue that doesn’t seem to affect NTFS.

3. I once accidentally wiped a physical disk that was part of a single-parity NTFS volume using the “ATA secure erase command”, and Storage Spaces happily chugged along without flagging the wiped disk as faulty until after a manual Windows restart. Files on the NTFS volume had corruption that neither CHKDSK nor the data integrity scrubber could detect, so watch out!

Many thanks to Chris for his brilliant work in bringing the ReFS integrity issue to Microsoft’s attention; until it’s resolved, the quest for truly resilient data storage on Windows continues in 2023 and beyond!

Hi Tatwang, I’m curious about the parity performance. I recall reading the TechNet discussion back when I was testing it, but I never managed to get acceptable performance. In my case, if I use parity with 3 disks, what would be the recommended columns / cluster size / allocation unit size for optimal performance? I’m willing to test this again, and I appreciate your shared insights!

Hi Carlos, for 3 disks with single parity, the setup looks like this:

1. PowerShell: New-VirtualDisk -StoragePoolFriendlyName "" -FriendlyName "" -ResiliencySettingName Parity -Size GB -ProvisioningType Thin -PhysicalDiskRedundancy 1 -NumberOfColumns 3 -Interleave 16KB

2. Format switches: FORMAT volume /FS:NTFS /Q /A:32K

Single 20GB file transfer on 2.5″ 7200 rpm HDDs (paused immediately after the transfer started to let the buffer stabilise, then resumed to observe the speed):

– Single disk without Storage Spaces = 120MB/s read/write

– “Simple volume” with 3 columns, 4kB cluster = 150-210MB/s write, 180MB/s read

– “Single parity” with 3 columns, 32kB cluster = 100-140MB/s write, 130MB/s read

The parity performance tweak appears to be more consistently visible with NTFS and without BitLocker; features like parity, BitLocker, ReFS, and integrity streams visibly reduced the performance gain from the alignment tweak. It felt like Storage Spaces has an IOPS-consuming overhead that a faster CPU doesn’t reduce.

Hope this helps.

Hi Tatwang,

Thanks for providing more details. I looked through my notes, and now I remember why I ended up giving up on this. As you mentioned, there are performance penalties depending on the setup, and I found that BitLocker alone was quite detrimental.

Once I have more time, I will update this post and perhaps add a separate one with the findings for each configuration mode. Based on what I’ve tested, if the data on the parity volume is fairly static, SnapRAID might be a better option in terms of performance, the main drawback being that it is a snapshot-based solution rather than a real-time one.

Delete the test files and run the test again. Is the write performance still the same? In my case, the first write to free space ran at about 300MB/s on a 3x 10TB single-parity volume, but a second run to the “same place” ran at about 30MB/s. Read performance was good throughout, but writes were only fast on unallocated sectors.

I tried SnapRAID, but I wanted to avoid the extra manual work of syncing parity and scrubbing for integrity; it also lacks support for storage pooling, disks of different sizes, and data that is updated more frequently than the sync runs.

Storage Spaces + ReFS + integrity streams would work like a dream if Chris Hill’s efforts above eventually succeed, and I’m counting on it!